When CoreDNS Falls Silent: A Kubernetes DNS Disaster Story & The Playbook That Saved Us

A real-world incident narrative + definitive best practices for CoreDNS at scale

Prologue: The Calm Before the Storm

The cluster was healthy. 312 pods spread across 24 nodes. CoreDNS: two replicas, default settings, humming along since the cluster was provisioned eighteen months ago. Nobody had touched it. Nobody needed to touch it.

Until the Wednesday nobody expected.

Chapter 1 The Incident: "Why Is Payment Timing Out?"

It started with a Slack ping at 11:42 PM.

@oncall-alert

[CRITICAL] Payment service unreachable: circuit breaker open on checkout-gateway

I SSH'd into the jump box. First instinct: kubectl get pods.

$ kubectl get pods -n production | grep payment

payment-svc-8d4f6b7c-x2k9m 1/1 Running 0 45d

order-processor-6c8d9f4-x7q2w 1/1 Running 0 45d

All pods running. All healthy.

$ kubectl get svc -n production

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S)

payment-service ClusterIP 10.102.144.200 <none> 8080/TCP

order-processor ClusterIP 10.102.145.88 <none> 8080/TCP

Services exist. IPs assigned. Then I did the obvious:

$ kubectl exec -it payment-svc-8d4f6b7c-x2k9m -n production -- curl -s http://10.102.145.88:8080/health

{"status":"ok"}

$ kubectl exec -it payment-svc-8d4f6b7c-x2k9m -n production -- nslookup order-processor.production.svc.cluster.local

;; connection timed out; no servers could be reached

IP works. DNS doesn't.

The entire payment pipeline was dead, not because services were down, but because pods couldn't find each other by name. Every microservice call that relied on DNS resolution was failing. Idempotency queues were backing up. Retry storms were starting. It took 15 minutes before we declared it a SEV-2.

Chapter 2 The Interrogation: What Is CoreDNS Doing?

2.1 Logging Into CoreDNS

$ kubectl logs -n kube-system -l k8s-app=kube-dns --tail=200

The errors were telling:

[ERROR] plugin/kubernetes: Get "https://10.96.0.1:443/api/v1/namespaces/production/services/order-processor":

context deadline exceeded (Client.Timeout exceeded while awaiting headers)

[ERROR] plugin/forward:

2 errors occurred:

* read udp 10.244.1.8:53291->8.8.8.8:53: i/o timeout

* read udp 10.244.1.8:38472->8.8.4.4:53: i/o timeout

Two problems screaming simultaneously:

- CoreDNS couldn't talk to the Kubernetes API server fast enough (internal lookups failing)

- CoreDNS couldn't reach upstream DNS (external lookups timing out)

2.2 Checking CoreDNS Health

$ kubectl get pods -n kube-system -l k8s-app=kube-dns

NAME READY STATUS RESTARTS AGE

coredns-5d78c9fd5d-4kx2m 1/1 Running 0 182d

coredns-5d78c9fd5d-9vr3j 1/1 Running 0 182d

Pods were "Running." But running doesn't mean performing.

2.3 Measuring the Damage

# From a pod: external DNS resolution

$ time nslookup google.com

Server: 10.96.0.10

Address: 10.96.0.10#53

;; connection timed out; no servers could be reached

;; → Total: 5 seconds of waiting, then failure

# Internal resolution: same story

$ time nslookup order-processor.production.svc.cluster.local

Server: 10.96.0.10

Address: 10.96.0.10#53

;; connection timed out; no servers could be reached

Normal DNS resolution should take 1–5 milliseconds. We were at 5 seconds (timeout) or complete failure.

2.4 CPU Throttling: The Hidden Killer

$ kubectl top pods -n kube-system -l k8s-app=kube-dns

NAME CPU(cores) MEMORY(bytes)

coredns-5d78c9fd5d-4kx2m 97m 168Mi

coredns-5d78c9fd5d-9vr3j 95m 172Mi

$ kubectl get deployment coredns -n kube-system -o jsonpath='{.spec.template.spec.containers[0].resources}'

{"limits":{"cpu":"100m","memory":"170Mi"},"requests":{"cpu":"75m","memory":"70Mi"}}

97m usage against 100m limit. Three millicores of headroom for a Go binary handling hundreds of queries per second. CoreDNS was CPU-throttled nearly continuously.

# Confirmed: throttling counters through the roof

kubectl debug -it -n kube-system coredns-5d78c9fd5d-4kx2m --image=busybox --target=coredns -- cat /sys/fs/cgroup/cpu.stat

nr_throttled 14832

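throttled_time 294812005 # cumulative counter, not a per-minute rate: about 294.8 s (4.9 minutes) throttled in total if read as microseconds (cgroup v2 names this field throttled_usec)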

The Go scheduler was starving. Goroutines queued, DNS queries backed up, timeouts cascaded.
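The counters in cpu.stat are easier to reason about as a ratio. Below is a minimal Python sketch, assuming cgroup v2 field names (`nr_periods`, `nr_throttled`, `throttled_usec`) with a fallback to the cgroup v1 `throttled_time` field in nanoseconds. The function names are hypothetical, and the `nr_periods` value in the sample is made up for illustration; it was not captured during the incident.

```python
def parse_cpu_stat(text):
    """Parse the 'key value' lines of a cgroup cpu.stat file into a dict of ints."""
    stats = {}
    for line in text.splitlines():
        parts = line.split()
        if len(parts) == 2 and parts[1].isdigit():
            stats[parts[0]] = int(parts[1])
    return stats


def throttle_summary(stats):
    """Return (fraction of CFS periods throttled, cumulative throttled seconds)."""
    periods = stats.get("nr_periods", 0)
    fraction = stats.get("nr_throttled", 0) / periods if periods else 0.0
    if "throttled_usec" in stats:      # cgroup v2 reports microseconds
        seconds = stats["throttled_usec"] / 1e6
    else:                              # cgroup v1 reports nanoseconds
        seconds = stats.get("throttled_time", 0) / 1e9
    return fraction, seconds


# Illustrative input: nr_periods is an assumed value, not incident data.
sample = "nr_periods 15000\nnr_throttled 14832\nthrottled_usec 294812005\n"
fraction, seconds = throttle_summary(parse_cpu_stat(sample))
print(f"{fraction:.1%} of periods throttled, {seconds:.1f}s throttled in total")
```

Because both counters are cumulative, the useful signal is how fast they grow between two reads, which is what a rate-based alert would measure.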

2.5 The ndots Multiplier

Let's talk about the silent multiplier. Every pod using the default ClusterFirst DNS policy gets its resolver settings from the kubelet, which writes them to the pod's /etc/resolv.conf. Expressed as the equivalent dnsConfig, they look like this:

dnsConfig:
  options:
    - name: ndots
      value: "5"
  searches:
    - default.svc.cluster.local
    - svc.cluster.local
    - cluster.local
    - eu-west-1.compute.internal

ndots: 5 is a threshold, not a queue. Here's how it actually works:

- If a hostname has fewer than N dots, the resolver appends each search domain in turn first, then tries the name as-is only if none of those succeed.

- If a hostname has N dots or more, the resolver tries it as an absolute name first, then falls through to search domains if it fails.

So with ndots: 5, when our application calls api.stripe.com (which has 2 dots, fewer than 5):

| Attempt | Query Sent | Result |
|---|---|---|
| 1 | api.stripe.com.default.svc.cluster.local | NXDOMAIN |
| 2 | api.stripe.com.svc.cluster.local | NXDOMAIN |
| 3 | api.stripe.com.cluster.local | NXDOMAIN |
| 4 | api.stripe.com.eu-west-1.compute.internal | NXDOMAIN |
| 5 | api.stripe.com | Resolved |

5 queries for 1 hostname. With 312 pods × an average of 8 external calls per startup × 5 lookups each, that's 12,480 DNS queries hitting CoreDNS. With ndots: 2, that's 2,496 queries. A 5x amplification, with 80% of the queries wasted, caused by one setting.

Why ndots: 2 is the right production value: fully qualified service names follow the pattern service.namespace.svc.cluster.local, which has 4 dots, so with ndots: 2 they are tried as absolute names first (which is correct for FQDNs). Short names like order-processor (0 dots) and service.namespace forms like payment-service.production (1 dot) still get the search domains appended. External hostnames like api.stripe.com (2 dots) are tried as absolute first, with no search domain penalty.
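The threshold rule is compact enough to express in code. The following Python sketch models the order in which a glibc-style stub resolver tries names; it is a simplification (other resolvers, such as musl, differ in details), and `candidate_queries` is a hypothetical helper name, not a real library call. The search list is the one shown earlier.

```python
def candidate_queries(name, search, ndots):
    """Return the names a glibc-style stub resolver tries, in order."""
    if name.endswith("."):                 # trailing dot: already absolute
        return [name.rstrip(".")]
    expanded = [f"{name}.{domain}" for domain in search]
    if name.count(".") >= ndots:
        return [name] + expanded           # as-is first, then the search list
    return expanded + [name]               # search list first, as-is last


SEARCH = [
    "default.svc.cluster.local",
    "svc.cluster.local",
    "cluster.local",
    "eu-west-1.compute.internal",
]

for ndots in (5, 2):
    tried = candidate_queries("api.stripe.com", SEARCH, ndots)
    # A real resolver stops at the first name that resolves.
    position = tried.index("api.stripe.com") + 1
    print(f"ndots={ndots}: resolves on attempt {position} of {len(tried)}")
```

With ndots=5 the external name is the fifth attempt; with ndots=2 it is the first, so the four search-domain lookups never happen.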

Chapter 3 The Diagnosis: Five Problems At Once

We'd found the killers. Not one, but five compounding failures. Here's how they fed each other:

┌─────────────────────────────────────────────────────────────┐
│                    COREDNS FAILURE CHAIN                    │
│                                                             │
│  1. ndots: 5         → 5x query amplification on externals  │
│         ↓                                                   │
│  2. No node-local    → Every query traverses the network    │
│     cache              to reach CoreDNS pods                │
│         ↓                                                   │
│  3. Resources too    → 97m/100m CPU = constant throttling   │
│     tight            → goroutines back up                   │
│         ↓                                                   │
│  4. Cache exhausted  → Under load, cache evicts entries,    │
│     under memory       misses spike, more upstream queries  │
│         ↓                                                   │
│  5. Static replica   → No autoscaling, no PDB               │
│     count            → Single point of failure              │
│                                                             │
│  Result: 5s timeouts → Circuit breakers trip → OUTAGE       │
└─────────────────────────────────────────────────────────────┘

Chapter 4 The Fix: Step by Step

We applied fixes in strict order, each one building on the previous. Changing five things simultaneously is how you create a new mystery.

Step 1: Set ndots: 2 (80% Load Reduction in 5 Minutes)

The mechanism, stated precisely: With ndots: 2, any name with 2 or more dots is tried as an absolute name first (before search domains are appended). Names with fewer than 2 dots still use the search list first. In practice, this means:

| Name | Dots | Behavior under ndots: 2 |
|---|---|---|
| order-processor | 0 | Search domains appended first: order-processor.default.svc.cluster.local → resolves (when the service lives in the pod's own namespace) |
| order-processor.prod | 1 | Search domains appended first: order-processor.prod.default.svc.cluster.local → NXDOMAIN, then order-processor.prod.svc.cluster.local → resolves |
| order-processor.production.svc | 2 | Tried as absolute first (2 ≥ 2) → NXDOMAIN, then falls through to the search list, where order-processor.production.svc.cluster.local resolves |
| api.stripe.com | 2 | Tried as absolute first (2 ≥ 2) → resolves immediately on direct lookup |
| api.example.com | 2 | Tried as absolute first → resolves directly, no search domain penalty |

# Pod-level configuration
spec:
  dnsPolicy: ClusterFirst
  dnsConfig:
    options:
      - name: ndots
        value: "2"

To enforce ndots: 2 cluster-wide without editing every single Deployment YAML, one option is a mutating admission webhook (for example, a policy engine such as Kyverno or OPA Gatekeeper) that injects the dnsConfig block into pods as they are created.
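To make the injection concrete, here is a rough Python sketch of the response a hand-written mutating webhook could return for a pod admission request. It uses the `admission.k8s.io/v1` AdmissionReview shape with a base64-encoded JSON Patch. The function name `ndots_patch_response` and the sample request are hypothetical, and a real webhook would also need TLS serving and a MutatingWebhookConfiguration, which this sketch leaves out.

```python
import base64
import json


def ndots_patch_response(review, ndots="2"):
    """Build an AdmissionReview response that sets ndots on the incoming pod."""
    request = review["request"]
    spec = request["object"]["spec"]
    option = {"name": "ndots", "value": ndots}
    if "dnsConfig" not in spec:
        ops = [{"op": "add", "path": "/spec/dnsConfig", "value": {"options": [option]}}]
    elif "options" not in spec["dnsConfig"]:
        ops = [{"op": "add", "path": "/spec/dnsConfig/options", "value": [option]}]
    elif any(o.get("name") == "ndots" for o in spec["dnsConfig"]["options"]):
        ops = []  # respect an explicit per-pod setting
    else:
        ops = [{"op": "add", "path": "/spec/dnsConfig/options/-", "value": option}]
    response = {"uid": request["uid"], "allowed": True}
    if ops:
        response["patchType"] = "JSONPatch"
        response["patch"] = base64.b64encode(json.dumps(ops).encode()).decode()
    return {"apiVersion": "admission.k8s.io/v1", "kind": "AdmissionReview", "response": response}


# Hypothetical request for a pod that has no dnsConfig yet.
sample_review = {"request": {"uid": "example-uid", "object": {"spec": {"containers": []}}}}
reply = ndots_patch_response(sample_review)
print(json.loads(base64.b64decode(reply["response"]["patch"])))
```

Pods that already set ndots are left alone here, so teams can still opt out per workload; a policy engine expresses the same rule declaratively.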

Impact measurement:

Before: 312 pods × 8 calls × 5 lookups = 12,480 external queries

After: 312 pods × 8 calls × 1 direct = 2,496 external queries

Reduction: 80%

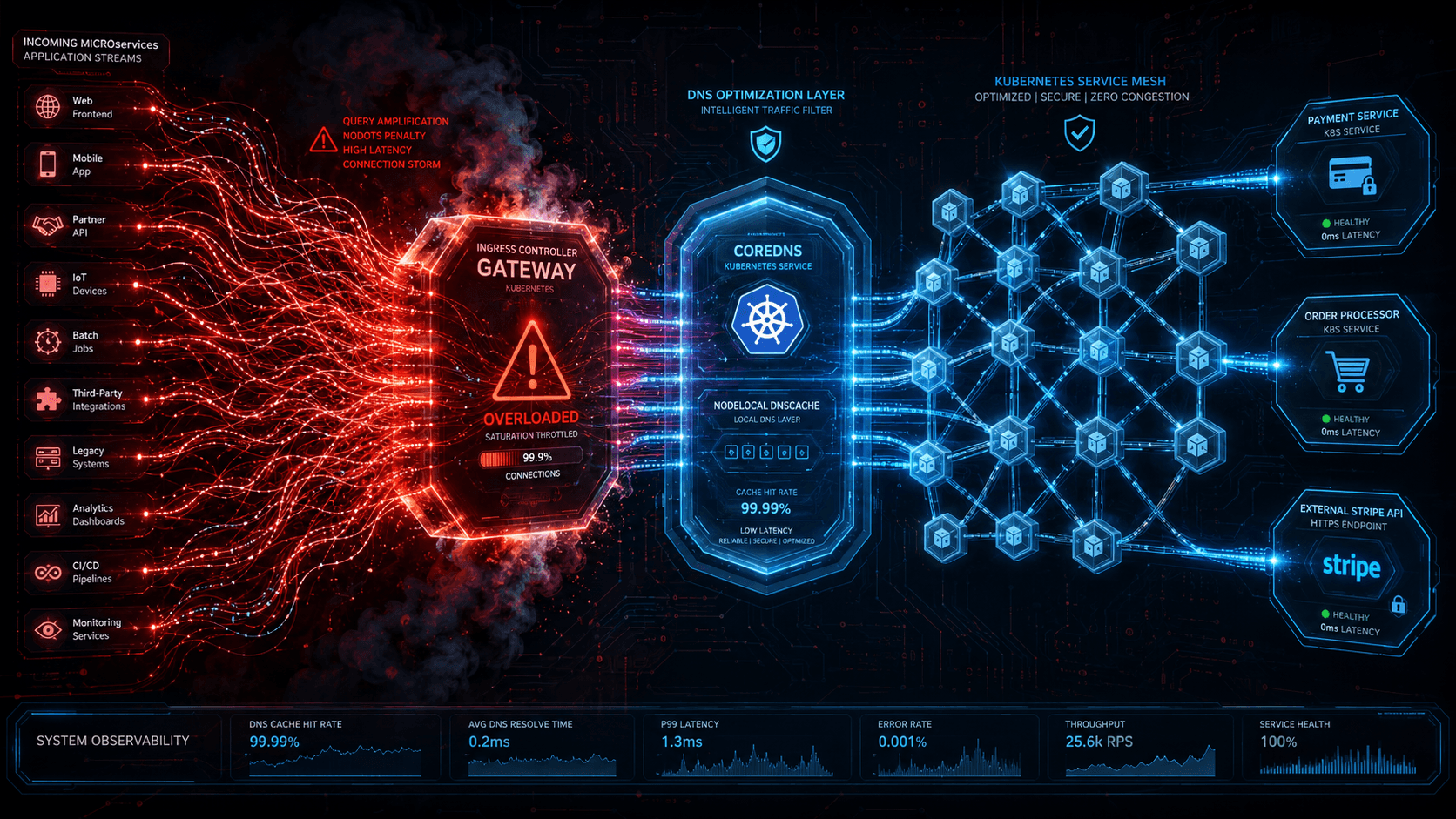

Step 2: Deploy NodeLocal DNSCache (The Game Changer)

Before you deploy the manifest, understand what changes architecturally:

What it is: A lightweight CoreDNS cache instance running as a DaemonSet on every node, bound to the link-local IP 169.254.20.10. Pods resolve DNS locally instead of sending queries across the cluster to CoreDNS pods.

The architecture shift:

BEFORE (today):

┌───────┐    ┌──────────────────────────────────────────┐
│ Pod A │───▶│ kube-dns Service (ClusterIP: 10.96.0.10) │
└───────┘    └────────────────────┬─────────────────────┘
                                  │
                     ┌────────────▼────────────┐
                     │ CoreDNS Pod (Node 3)    │──▶ Upstream (8.8.8.8)
                     │ CoreDNS Pod (Node 7)    │──▶ Upstream (8.8.4.4)
                     └─────────────────────────┘
                                  ↑
                     Network hop on EVERY query
                     Cross-node traffic
                     CoreDNS pods are the bottleneck

AFTER (with NodeLocal DNSCache):

┌───────┐    ┌────────────────────────────┐
│ Pod A │───▶│ Node Cache (169.254.20.10) │───▶ Cache hit → instant
└───────┘    │ (same node)                │
             └─────────────┬──────────────┘
                           │ (only on cache miss)
              ┌────────────▼────────────┐
              │ CoreDNS Pod (any node)  │──▶ Upstream (8.8.8.8)
              └─────────────────────────┘

No cross-node traffic for cached queries
CoreDNS load drops ~90%

Why this matters: For a large cluster, the majority of DNS queries are for services that haven't changed recently and can be cached. By keeping the cache local, you eliminate the network round-trip and reduce CoreDNS load simultaneously.

Deployment steps:

Step 2a: Test on a subset of nodes first (rollback safety)

Before deploying cluster-wide, validate on a small canary node group:

# Canary subset: deploy to specific nodes first
# Assumes the nodelocaldns ServiceAccount and the kube-dns-upstream Service already exist
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: nodelocaldns
  namespace: kube-system
  labels:
    k8s-app: node-local-dns  # must differ from kube-dns, or the kube-dns Service and PDB would select these pods too
spec:
  selector:
    matchLabels:
      k8s-app: node-local-dns
  template:
    metadata:
      labels:
        k8s-app: node-local-dns
    spec:
      priorityClassName: system-node-critical
      serviceAccountName: nodelocaldns
      hostNetwork: true
      dnsPolicy: Default
      tolerations:
        - operator: Exists
      # RESTRICT TO CANARY NODES FIRST:
      nodeSelector:
        node-role: worker-canary  # Label only your test nodes
      # Once validated, remove nodeSelector for full deployment
      containers:
        - name: node-cache
          image: registry.k8s.io/dns/k8s-dns-node-cache:1.23.0
          args:
            - "-localip=169.254.20.10"
            - "-conf=/etc/Corefile/Corefile"
            - "-upstreamsvc=kube-dns-upstream"
          resources:
            requests:
              cpu: 5m
              memory: 5Mi
            limits:
              cpu: 50m
              memory: 15Mi
          ports:
            - containerPort: 53
              name: dns-udp
              protocol: UDP
            - containerPort: 53
              name: dns-tcp
              protocol: TCP
            - containerPort: 9259
              name: metrics
              protocol: TCP
          securityContext:
            privileged: true
          volumeMounts:
            - name: config-volume
              mountPath: /etc/Corefile
              readOnly: true
            - name: xtables-lock
              mountPath: /run/xtables.lock
      volumes:
        - name: xtables-lock
          hostPath:
            path: /run/xtables.lock
            type: FileOrCreate
        - name: config-volume
          configMap:
            name: nodelocaldns
            items:
              - key: Corefile
                path: Corefile
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: nodelocaldns
  namespace: kube-system
data:
  Corefile: |
    cluster.local {
        errors
        cache {
            success 99840 30  # Positive cache: up to 99,840 entries, TTL capped at 30 s
            denial 9984 30    # NXDOMAIN cache: up to 9,984 entries, TTL capped at 30 s
        }
        reload
        forward . __PILLAR__CLUSTER__DNS__
    }
    in-addr.arpa {
        errors
        cache 30
        reload
        forward . __PILLAR__CLUSTER__DNS__
    }
    ip6.arpa {
        errors
        cache 30
        reload
        forward . __PILLAR__CLUSTER__DNS__
    }
    . {
        errors
        cache 30
        reload
        forward . __PILLAR__UPSTREAM__SERVERS__
    }

Important: In the upstream nodelocaldns.yaml manifest, the __PILLAR__CLUSTER__DNS__ and __PILLAR__UPSTREAM__SERVERS__ placeholders are filled in by the node-local-dns pods themselves at startup; kubeadm has no feature gate that does this for you. If you maintain a hand-written Corefile instead, replace __PILLAR__CLUSTER__DNS__ with the kube-dns ClusterIP (e.g., 10.96.0.10) and __PILLAR__UPSTREAM__SERVERS__ with upstream resolvers (e.g., 8.8.8.8 8.8.4.4).

Rollback procedure:

# If issues arise on canary nodes:

# 1. Remove the DaemonSet; pods go back to using the kube-dns service

kubectl delete daemonset nodelocaldns -n kube-system

# 2. Verify DNS is working again through the normal path

kubectl exec -it <pod> -- nslookup order-processor.production.svc.cluster.local

# Should resolve through kube-dns service again

# 3. Investigate and fix before re-deploying

If NodeLocal DNSCache intercepts all DNS traffic and the upstream is misconfigured, pods will experience resolution failures. The nodeSelector approach above lets you validate on a subset before cluster-wide rollout.

Step 3: Tune the Corefile (Make CoreDNS Efficient)

apiVersion: v1
kind: ConfigMap
metadata:
  name: coredns
  namespace: kube-system
data:
  Corefile: |
    .:53 {
        errors
        health {
            lameduck 5s
        }
        ready

        # Aggressive caching layer
        cache {
            success 99840 30    # Up to 99,840 successful responses, TTL capped at 30s
            denial 9984 60      # Up to 9,984 NXDOMAIN responses, TTL capped at 60s (prevents repeated failed lookups)
            prefetch 10 1m 25%  # Refresh entries queried 10+ times per minute once 25% of their TTL remains
        }

        # Kubernetes service discovery, hardened
        kubernetes cluster.local in-addr.arpa ip6.arpa {
            pods verified       # Answer pod-IP names only for pods that actually exist (watches all pods, so uses more memory)
            fallthrough in-addr.arpa ip6.arpa
            ttl 30              # TTL on cluster records (the plugin default is 5s)
        }

        # External upstream, resilient configuration
        forward . 8.8.8.8 8.8.4.4 1.1.1.1 {
            max_concurrent 1000  # Cap in-flight upstream queries
            prefer_tcp           # Try TCP to upstreams first, even for UDP clients
            health_check 30s     # Upstream health-check interval (default 0.5s; lower it to detect failures faster)
            policy random        # Distribute load across upstreams
            expire 10s           # Close idle cached upstream connections after 10s
        }

        # Allow runtime Corefile reload without pod restart
        reload

        # Metrics for monitoring
        prometheus :9153
    }

Key directives explained:

| Directive | Why It Matters |
|---|---|
| max_concurrent 1000 | Caps in-flight upstream queries so a burst cannot exhaust memory or overwhelm the upstreams |
| prefetch | Proactively refreshes popular entries before TTL expires, preventing cache stampedes |
| pods verified | Answers pod-IP names only when a matching pod exists in that namespace, so stale pod records are not served |
| fallthrough | Passes reverse lookups (in-addr.arpa, ip6.arpa) that Kubernetes cannot answer to the next plugin instead of failing |
| prefer_tcp | TCP handles large responses and retries better than UDP, reducing silent packet loss |
| denial | Negative caching (TTL capped at 60s) stops repeated NXDOMAIN lookups for non-existent names |
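The cache arguments are easy to misread: in the cache plugin, `success CAPACITY [TTL] [MINTTL]` and `denial CAPACITY [TTL] [MINTTL]` take an entry count first, then a maximum TTL, then a minimum TTL. The short Python sketch below models how long one answer would stay cached under that reading. `cached_for` is a hypothetical helper, and the defaults shown (3600 s maximum for successes, 5 s minimum) are my understanding of the plugin defaults rather than values from this cluster.

```python
def cached_for(record_ttl, max_ttl=3600, min_ttl=5):
    """Seconds an answer stays cached: the record TTL clamped to [min_ttl, max_ttl]."""
    return max(min_ttl, min(record_ttl, max_ttl))


# A record published with a 300 s TTL, under a 30 s cap:
print(cached_for(300, max_ttl=30))
# A record with a 1 s TTL is held for the minimum instead:
print(cached_for(1, max_ttl=30))
```

So a line such as `success 99840 30` sizes the cache at 99,840 entries and holds each one for at most 30 seconds.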

Step 4: Right-Size Resources (Stop Starving the Go Runtime)

Forget the default 100m CPU limit. CoreDNS is a Go binary with concurrent goroutines servicing all cluster DNS. The official CoreDNS scaling benchmarks show:

Single CoreDNS replica on 2 vCPU node:

- Internal queries: 33,669 QPS (2.6ms latency)

- External queries: 6,733 QPS (12ms latency, client perspective)

- At this load, both vCPUs were pegged at ~1900m

Memory formula (from CoreDNS Scaling Guide):

Memory (default settings) = (Pods + Services) / 1000 + 54 MB

| Cluster Scale | Pods + Services | Memory Needed |
|---|---|---|
| Small (< 50 pods) | ~60 | ~54 Mi |
| Medium (500 pods) | ~600 | ~55 Mi |
| Large (5000 pods) | ~6,000 | ~60 Mi |
| XLarge (150K pods) | ~158,000 | ~212 Mi |
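The formula is simple enough to check by hand, but a small Python sketch makes it easy to plug in your own numbers. `coredns_memory_mb` is a hypothetical helper that applies the default-settings formula quoted above; the split between pods and services in each example row is an assumption chosen to match the totals in the table.

```python
def coredns_memory_mb(pods, services, base_mb=54, objects_per_mb=1000):
    """Estimated CoreDNS memory in MB: (pods + services) / 1000 + 54."""
    return (pods + services) / objects_per_mb + base_mb


# (label, pods, services): the pod/service split is illustrative only.
examples = [
    ("Small", 50, 10),
    ("Medium", 500, 100),
    ("Large", 5000, 1000),
    ("XLarge", 150000, 8000),
]
for label, pods, services in examples:
    print(f"{label}: {coredns_memory_mb(pods, services):.1f} MB")
```

The constant term dominates until clusters reach tens of thousands of objects, which is why memory is rarely the first limit a small cluster hits.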

# Resource requests/limits: no more starving the Go runtime
resources:
  requests:
    cpu: "200m"
    memory: "150Mi"
  limits:
    cpu: "500m"
    memory: "250Mi"

After this change in our cluster:

Before: throttled_time ≈ 294 seconds accumulated and still climbing (CPU at 97% of its limit)

After: throttled_time no longer increasing

Step 5: Deploy CPA (Cluster Proportional Autoscaler)

This is not regular HPA. The Cluster Proportional Autoscaler is specifically designed for infrastructure add-ons like CoreDNS that need to scale proportionally with cluster size. Unlike HPA, which needs a metrics pipeline (metrics-server, or a custom metrics API for non-resource metrics), CPA watches the cluster's node and core counts and adjusts replicas via a simple formula.

The formula:

replicas = max(ceil(nodes / nodesPerReplica), ceil(cores / coresPerReplica))

With a floor of 2 when preventSinglePointFailure: true.

apiVersion: v1
kind: ConfigMap
metadata:
  name: coredns-cpa-config
  namespace: kube-system
data:
  linear: |
    {"coresPerReplica": 128, "nodesPerReplica": 4, "preventSinglePointFailure": true}

Scaling examples (annotated with the binding constraint):

| Nodes | Cores | Calculation | Replicas | Binding Constraint |
|---|---|---|---|---|
| 8 | 16 | max(ceil(8/4), ceil(16/128)) = max(2, 1) | 2 | nodesPerReplica (2 > 1). Also equals the preventSinglePointFailure floor of 2. |
| 24 | 48 | max(ceil(24/4), ceil(48/128)) = max(6, 1) | 6 | nodesPerReplica (6 > 1) |
| 100 | 200 | max(ceil(100/4), ceil(200/128)) = max(25, 2) | 25 | nodesPerReplica (25 > 2) |

In every example above, nodesPerReplica is the binding constraint: the core-based term rounds up to only 1 or 2, so it never wins. This is typical for CoreDNS, which is more sensitive to the number of nodes (and therefore the number of NodeLocal DNSCache instances generating upstream queries) than to raw cluster compute.
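The linear formula can be sanity-checked before touching the cluster. This Python sketch reimplements it under the assumptions stated in this section: both terms are rounded up, and preventSinglePointFailure raises the result to 2 whenever the cluster has more than one node. `linear_replicas` and its `minimum` parameter are hypothetical names for illustration, not the autoscaler's own code.

```python
import math


def linear_replicas(nodes, cores, nodes_per_replica, cores_per_replica,
                    prevent_single_point_failure=True, minimum=1):
    """Replica count under the linear mode described above."""
    by_nodes = math.ceil(nodes / nodes_per_replica)
    by_cores = math.ceil(cores / cores_per_replica)
    replicas = max(by_nodes, by_cores, minimum)
    if prevent_single_point_failure and nodes > 1:
        replicas = max(replicas, 2)
    return replicas


for nodes, cores in [(8, 16), (24, 48), (100, 200)]:
    count = linear_replicas(nodes, cores, nodes_per_replica=4, cores_per_replica=128)
    print(f"{nodes} nodes / {cores} cores -> {count} replicas")
```

Trying a larger nodesPerReplica here is a cheap way to see how many CoreDNS pods a given setting would produce at your expected cluster size.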

Cloud vendor endorsement: Oracle OKE, AWS EKS, and Azure AKS all recommend CPA for CoreDNS autoscaling. EKS Best Practices explicitly states: "It's recommended you use NodeLocal DNS or the cluster proportional autoscaler to scale CoreDNS."

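# Deploy CPA via Helm
# Check the value names below (options.target, config.linear.*, resources.*) against `helm show values` for your chart version
helm install coredns-cpa cluster-proportional-autoscaler \
  --repo https://kubernetes-sigs.github.io/cluster-proportional-autoscaler \
  --namespace kube-system \
  --set image.tag=v1.12.0 \
  --set options.target=deployment/coredns \
  --set config.linear.coresPerReplica=128 \
  --set config.linear.nodesPerReplica=4 \
  --set config.linear.preventSinglePointFailure=true \
  --set resources.requests.cpu=100m \
  --set resources.requests.memory=70Mi \
  --set resources.limits.cpu=500m \
  --set resources.limits.memory=250Mi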

Step 6: PodDisruptionBudget (Never Take All DNS Down at Once)

apiVersion: policy/v1
kind: PodDisruptionBudget
metadata:
  name: coredns-pdb
  namespace: kube-system
spec:
  minAvailable: 2  # Never fewer than 2 running CoreDNS pods
  selector:
    matchLabels:
      k8s-app: kube-dns

Without a PDB, a node drain or other voluntary eviction can take down all CoreDNS replicas at once: an instant cluster-wide DNS blackout. This is especially dangerous when using NodeLocal DNSCache, because the node-local caches still forward misses to CoreDNS pods. If all CoreDNS pods are evicted, every cache miss fails. Note that minAvailable: 2 with only two replicas blocks drains entirely, so apply it once CoreDNS runs more than two replicas.
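How much room a PDB leaves for drains follows from simple arithmetic. The Python sketch below shows the calculation for an integer minAvailable, which is the number `kubectl get pdb` reports as allowed disruptions; `allowed_disruptions` is a hypothetical helper name.

```python
def allowed_disruptions(healthy_pods, min_available):
    """Voluntary evictions a PDB with an integer minAvailable permits right now."""
    return max(0, healthy_pods - min_available)


# Two replicas with minAvailable: 2 leaves no room, so a drain would wait.
print(allowed_disruptions(healthy_pods=2, min_available=2))
# Six replicas (the 24-node case) leaves room for four evictions.
print(allowed_disruptions(healthy_pods=6, min_available=2))
```

This is one reason to pair the PDB with the autoscaler: the budget only becomes practical once the replica count sits above the floor.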

Chapter 5 Verification: Did It Work?

# 1. DNS resolution speed should be <5ms now

$ time nslookup google.com

real 0m0.003s # ← was 5 seconds before

$ time nslookup order-processor.production.svc.cluster.local

real 0m0.002s # ← was timing out before

# 2. CoreDNS resource usage should have massive headroom

$ kubectl top pods -n kube-system -l k8s-app=kube-dns

NAME CPU(cores) MEMORY(bytes)

coredns-5d78c9fd5d-4kx2m 28m 88Mi # ← was 97m/100m

# 3. CPU throttling should be zero

kubectl debug -it -n kube-system coredns-5d78c9fd5d-4kx2m --image=busybox --target=coredns -- cat /sys/fs/cgroup/cpu.stat

nr_throttled 0

throttled_time 0 # ← was 294 seconds/minute

# 4. CoreDNS cache hit rate should be >90%

$ kubectl -n kube-system port-forward deploy/coredns 9153:9153 &

$ curl -s localhost:9153/metrics | grep coredns_cache

coredns_cache_hits_total 48291

coredns_cache_misses_total 4892

# Hit ratio: 90.8%

# 5. CPA working: verify replicas scaled

$ kubectl describe deployment coredns -n kube-system | grep Replicas

Replicas: 6 (desired)
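The hit ratio in check 4 can be computed straight from the metrics text rather than by hand. This Python sketch sums the two counters across any label sets and divides; `cache_hit_ratio` is a hypothetical helper, and it assumes the plain Prometheus text format without timestamps.

```python
def cache_hit_ratio(metrics_text):
    """Return hits / (hits + misses) from Prometheus text-format metrics."""
    hits = misses = 0.0
    for line in metrics_text.splitlines():
        if line.startswith("#") or not line.strip():
            continue
        name_and_labels, _, value = line.rpartition(" ")
        metric = name_and_labels.split("{", 1)[0]
        if metric == "coredns_cache_hits_total":
            hits += float(value)
        elif metric == "coredns_cache_misses_total":
            misses += float(value)
    total = hits + misses
    return hits / total if total else 0.0


sample = "coredns_cache_hits_total 48291\ncoredns_cache_misses_total 4892\n"
print(f"Hit ratio: {cache_hit_ratio(sample):.1%}")
```

Since these are counters since process start, comparing two scrapes a few minutes apart gives a better picture of the current ratio than a single read.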

Chapter 6 Monitoring: No More Surprises

# Prometheus alerting rules: add to your rules file
groups:
  - name: coredns.rules
    interval: 30s
    rules:
      # ALERT: CoreDNS error rate > 5% for 3 minutes
      # (rcode is a label on the responses counter; sum() collapses labels so the ratio is well-defined)
      - alert: CoreDNSHighErrorRate
        expr: |
          (
            sum(rate(coredns_dns_responses_total{rcode=~"SERVFAIL|REFUSED"}[5m]))
            /
            sum(rate(coredns_dns_responses_total[5m]))
          ) > 0.05
        for: 3m
        labels:
          severity: critical
        annotations:
          summary: "CoreDNS error rate is {{ $value | humanize }}"

      # ALERT: DNS resolution slow (p99 > 50ms)
      - alert: CoreDNSHighLatency
        expr: |
          histogram_quantile(0.99,
            sum by (le) (rate(coredns_dns_request_duration_seconds_bucket[5m]))
          ) > 0.05
        for: 5m
        labels:
          severity: warning
        annotations:
          summary: "CoreDNS p99 latency {{ $value }}s"

      # ALERT: Cache efficiency dropping
      - alert: CoreDNSCacheHitRateLow
        expr: |
          (
            sum(rate(coredns_cache_hits_total[10m]))
            /
            (sum(rate(coredns_cache_hits_total[10m])) + sum(rate(coredns_cache_misses_total[10m])))
          ) < 0.80
        for: 10m
        labels:
          severity: warning
        annotations:
          summary: "CoreDNS cache hit ratio below 80%"

      # ALERT: CPU throttling detected
      - alert: CoreDNSCPUThrottling
        expr: |
          rate(container_cpu_cfs_throttled_periods_total{container="coredns"}[5m]) > 0
        for: 2m
        labels:
          severity: warning
        annotations:
          summary: "CoreDNS pod {{ $labels.pod }} is being CPU throttled"

      # ALERT: CoreDNS pod restarting
      - alert: CoreDNSPodRestarts
        expr: |
          increase(kube_pod_container_status_restarts_total{container="coredns"}[1h]) > 3
        for: 10m
        labels:
          severity: warning

Chapter 7 The Playbook: TL;DR Checklist

╔══════════════════════════════════════════════════════════════╗
║            COREDNS PRODUCTION HARDENING CHECKLIST            ║
╠══════════════════════════════════════════════════════════════╣
║  □ 1. Set ndots: 2 (immediate 80% query reduction)           ║
║  □ 2. Deploy NodeLocal DNSCache (game changer for clusters   ║
║       with >50 pods; test on canary nodes first, then        ║
║       roll out cluster-wide)                                 ║
║  □ 3. Tune Corefile: cache, prefetch, max_concurrent,        ║
║       prefer_tcp, pods verified                              ║
║  □ 4. Right-size CPU limits (min 200m request, 500m limit;   ║
║       Go needs breathing room)                               ║
║  □ 5. Deploy CPA (Cluster Proportional Autoscaler),          ║
║       NOT regular HPA; it scales with cluster size           ║
║  □ 6. Set PodDisruptionBudget (minAvailable: 2)              ║
║  □ 7. Add monitoring alerts (error rate, latency,            ║
║       cache hit ratio, CPU throttling)                       ║
║  □ 8. Test with: kubectl exec <pod> -- nslookup              ║
║       <service> && verify <5ms, zero errors                  ║
╚══════════════════════════════════════════════════════════════╝

Priority order (do them in this sequence):

- ndots: 2 → 5 minutes, 80% of the problem solved

- NodeLocal DNSCache → 15 minutes, eliminates cross-node traffic (test on canary nodes first)

- Resource increase → 5 minutes, un-throttles the Go runtime

- Corefile tuning → 10 minutes, cache + prefetch + failover

- CPA + PDB → 20 minutes, future-proofs against cluster growth

- Monitoring → 30 minutes, prevents the next incident
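The final checklist item (verify resolution stays under 5 ms) can also be scripted from inside a pod. Here is a minimal Python sketch using only the standard library; `probe` is a hypothetical helper, the 5 ms budget mirrors the checklist, and the hostnames are the ones used in this article. Note that `socket.getaddrinfo` goes through the pod's own resolver configuration, so it exercises the search list and ndots as well.

```python
import socket
import time


def probe(names, resolve=socket.getaddrinfo, budget_ms=5.0):
    """Resolve each name once; map name -> (latency in ms or None, within budget)."""
    results = {}
    for name in names:
        start = time.perf_counter()
        try:
            resolve(name, None)
            elapsed_ms = (time.perf_counter() - start) * 1000
            results[name] = (elapsed_ms, elapsed_ms <= budget_ms)
        except OSError:  # socket.gaierror is a subclass of OSError
            results[name] = (None, False)
    return results


if __name__ == "__main__":
    targets = ["order-processor.production.svc.cluster.local", "api.stripe.com"]
    for name, (latency, ok) in probe(targets).items():
        shown = "failed" if latency is None else f"{latency:.1f} ms"
        print(f"{name}: {shown} ({'OK' if ok else 'over budget or failed'})")
```

A single lookup is a weak sample, so running this in a loop and looking at the slowest results gives a fairer comparison with the p99 alert.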

Epilogue

That Wednesday night, we applied Steps 1–4 between 12:00 AM and 12:47 AM. DNS resolution went from 5-second timeouts to 2-millisecond responses. We deployed CPA the following day. The cluster hasn't had a DNS-related incident since.

DNS is boring until it breaks, and when it breaks, everything breaks. At scale, CoreDNS isn't a set-and-forget service; it's critical infrastructure that demands deliberate sizing, caching strategy, and proportional autoscaling. The Cluster Proportional Autoscaler exists specifically because a one-off kubectl scale deployment coredns --replicas=X doesn't keep up when your cluster goes from 24 nodes to 240.

Monitor it. Size it. Cache it. Scale it.

Your 3 AM pager will thank you. 🌙

References

- CoreDNS Scaling Guide (GitHub)

- NodeLocal DNSCache (Kubernetes Official Docs)

- EKS Best Practices: Scaling Cluster Services

- Cluster Proportional Autoscaler (kubernetes-sigs)

- Improving DNS Performance with NodeLocalDNS (Neon Engineering Blog)

- Oracle OKE: Large Cluster Best Practices

- CoreDNS Kubernetes Plugin Documentation

- CoreDNS Cache Plugin Documentation